Last year, Synchron drew global attention when it paired its implanted brain-computer interface with Apple Vision Pro, allowing a person with ALS to control the headset without using their hands or voice. But Synchron is not the only BCI company using Apple’s headset as an access layer for people with physical impairments. Cognixion is now advancing a parallel effort from the non-invasive side of the field, using EEG-based brain readings to turn the Vision Pro into a communication tool for people who may have lost speech and movement but not cognitive intent.

Cognixion, based in Santa Barbara and led by founder and CEO Andreas Forsland, develops non-invasive brain-computer interface systems for people with complex communication and mobility impairments. Its platform combines EEG sensing, assistive communication software, and AI-based personalization to help users interact with digital environments without relying on conventional speech or motor control. In October 2025, the company launched a clinical study integrating its EEG-based system with Apple Vision Pro, extending its communication technology into a widely recognized spatial computing platform.

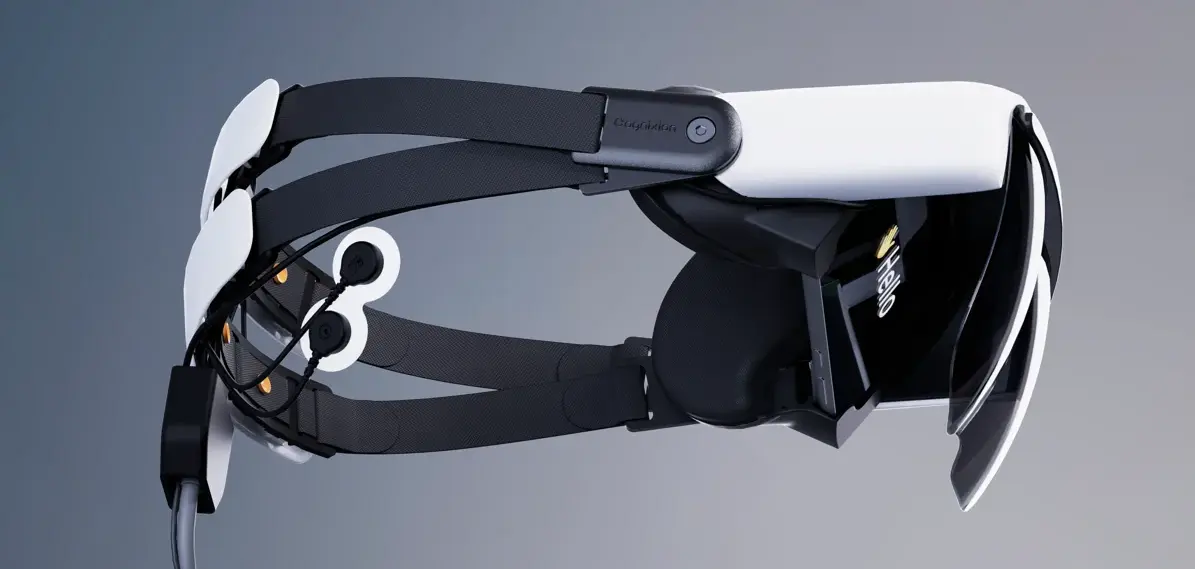

What makes Cognixion’s approach notable is that it does not rely on surgery. Instead of cutting into the brain, the company built a BCI that works from outside the head, reading electrical signals through the scalp using EEG. Its flagship system, called Axon-R, uses six to eight EEG sensors positioned over the regions of the brain responsible for processing visual information and spatial attention.

These sensors detect steady-state visually evoked potentials (SSVEPs). When a person focuses their attention on a visual stimulus flickering at a fixed rate, the brain generates a measurable electrical response at that same frequency. In SSVEP interfaces, each on-screen option typically flickers at its own frequency, so the frequency that dominates the EEG reveals which option the person is attending to. By monitoring these responses in real time, the system can decode what a person is focusing on and what they intend to select, all without them moving a single muscle.
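To make the idea concrete, here is a minimal sketch of frequency-based SSVEP detection in Python. It is a generic textbook approach, not Cognixion’s actual decoder, which the company has not described in this level of detail. The function name `detect_ssvep_target`, the 250 Hz sample rate, the candidate flicker frequencies, and the synthetic single-channel signal are all assumptions chosen for illustration.

```python
import numpy as np

def detect_ssvep_target(eeg, sample_rate, candidate_freqs, half_width=0.5):
    """Pick the candidate flicker frequency with the most spectral power.

    Hypothetical helper: a plain FFT power comparison on one EEG channel.
    Real systems usually combine several channels and harmonics.
    """
    eeg = np.asarray(eeg, dtype=float)
    eeg = eeg - eeg.mean()                      # remove DC offset
    windowed = eeg * np.hanning(eeg.size)       # reduce spectral leakage
    power = np.abs(np.fft.rfft(windowed)) ** 2  # power spectrum
    freqs = np.fft.rfftfreq(eeg.size, d=1.0 / sample_rate)

    scores = {}
    for f in candidate_freqs:
        # Sum power in a narrow band around each candidate frequency.
        band = (freqs >= f - half_width) & (freqs <= f + half_width)
        scores[f] = float(power[band].sum())

    best = max(scores, key=scores.get)
    return best, scores

# Synthetic example (assumed values): 4 seconds of noisy "EEG" in which the
# user attends to an option flickering at 12 Hz.
rng = np.random.default_rng(0)
sample_rate = 250                                # samples per second (assumed)
t = np.arange(1000) / sample_rate                # 4 seconds of samples
signal = np.sin(2 * np.pi * 12.0 * t) + rng.normal(0.0, 2.0, t.size)

candidates = [8.0, 10.0, 12.0, 15.0]             # one frequency per option
target, scores = detect_ssvep_target(signal, sample_rate, candidates)
print("Attended flicker frequency:", target)
```

In this toy setup, the 12 Hz band should stand out clearly against the noise even though the noise amplitude is larger than the signal’s, which is why longer observation windows and narrow frequency bands help with weak scalp recordings.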

This is where the Vision Pro integration becomes important. Instead of replacing the entire interface, Cognixion swaps out Apple’s standard headband for one embedded with its EEG sensors. The spatial computing display becomes the visual stimulus. An external neural processing unit, worn at the hip, translates raw brain signals into commands. The combined system then leverages Apple’s existing accessibility architecture, including eye tracking and dwell control.

What separates this from earlier attempts at brain-computer interfaces is the addition of artificial intelligence. Cognixion’s system feeds the decoded brain signals into a large language model trained on each user’s communication patterns, including how they speak, their writing history, and their preferred expressions. During clinical trials, participants provided emails, texts, and voice recordings so the system could better reflect their individual voice.

When the user focuses on visual options, the AI generates contextually appropriate suggestions that sound more like them. Earlier Cognixion systems were purpose-built communication devices, but integrating with Apple’s spatial computing platform gives users access to a much broader digital environment, including email, web browsing, and social media.

The Vision Pro already includes accessibility-oriented architecture, with built-in eye tracking, head tracking, switch control, and compatibility with external hardware. Cognixion’s EEG module becomes another input within that system. That makes the partnership more than a hardware pairing. It places Cognixion’s non-invasive BCI inside a broader computing environment already built with accessibility in mind. The current clinical trial is testing that approach in participants living with ALS, stroke, or traumatic brain injury, while also measuring how much AI-based personalization improves the user experience.

The company has also attracted outside validation. Cognixion has raised $25 million to date. Its 2021 $12 million seed round was led by Prime Movers Lab, with participation from the Amazon Alexa Fund and others. It received FDA Breakthrough Device designation in May 2023, placing it on an expedited review pathway. Its inclusion in TIME’s Best Inventions of 2025 added another layer of visibility, particularly as recognition of a wearable, non-invasive brain-computer interface. Together, those milestones suggest growing institutional confidence in the company’s approach.

CEO Andreas Forsland has repeatedly framed the company around speed, safety, and practical use. “Our goal is to unlock the potential of the human mind by providing a seamless interface that restores the fundamental right to communicate and connect with the world around us, without the need for surgery,” Forsland said.

Earlier trials point to the practical significance of that effort. Participants achieved communication speeds approaching normal conversation, and one patient who had been locked in for nearly a decade was able to answer questions and tell jokes. For people living with locked-in syndrome, where isolation and depression are common, that difference matters. Moving from selecting a letter every few minutes to participating in something closer to fluid conversation is not just a technical gain; it changes the quality of interaction itself.

EEG-based systems still face signal-quality constraints that invasive approaches do not. The skull weakens electrical signals before they reach surface electrodes, especially at higher frequencies, which limits fidelity compared with implanted systems. Cognixion works around that constraint through signal processing, interface design, and AI-based adaptation, but it is still operating within the limits of non-invasive sensing.

There are also practical tradeoffs. The headset weighs around 645 grams, making day-to-day comfort an important consideration for users with severe motor impairments. Cost is another barrier. Apple’s Vision Pro starts at $3,500, and the Cognixion module adds several thousand dollars more. There is also the question of how durable this product category will prove over the long term. Apple may eventually build its own neural sensing capabilities, or other companies may develop lighter and cheaper systems.

The broader significance lies in what this says about the direction of neurotechnology. For many users, safety and ease of use may matter more than maximum signal quality or technical features. Furthermore, many patients with neurodegenerative disorders like ALS may not be able to wait years for more experimental surgical systems to mature. A system that works without surgery carries clear value in that context, even if it comes with technical limitations compared to its invasive cousins.