Behind 20-foot walls of NDAs, far from public view, trillion-dollar corporations are building state-of-the-art neuroscience labs. Their neuroscientists earn six-figure salaries and receive stock option packages larger than the annual budgets of some local university labs. Yet these talented teams are not working on the great brain challenges of our time. Instead, they are mapping the brain for a wide range of commercial purposes.

And that is not always a bad thing. Apple has teams working on new ways to interact with its devices to increase accessibility, from exploring EEG in AirPods to recognizing silent speech. Samsung appears to be moving in a similar direction, with substantial research into neuro-adjacent features. Even Nike has a neuroscience lab, studying how the brain responds to different shoe designs.

Yet increasingly, a tension arises between corporate incentives and neurotech research. Last month, Meta released its TRIBE brain foundation model, designed to map video, audio, and language stimuli to fMRI responses, just one day after losing a landmark case alleging that its products rely on algorithms engineered to be addictive. And earlier this year, OpenAI, under pressure to become profitable, invested millions in a new brain-computer interface startup with a stated mission to build a new interface layer for AI.

Several of the world’s largest corporations that invest most heavily in brain science do not simply sell products. They profit from attention. And with those incentives firmly in place, can Big Tech be trusted with the growing toolkit of neurotechnology?

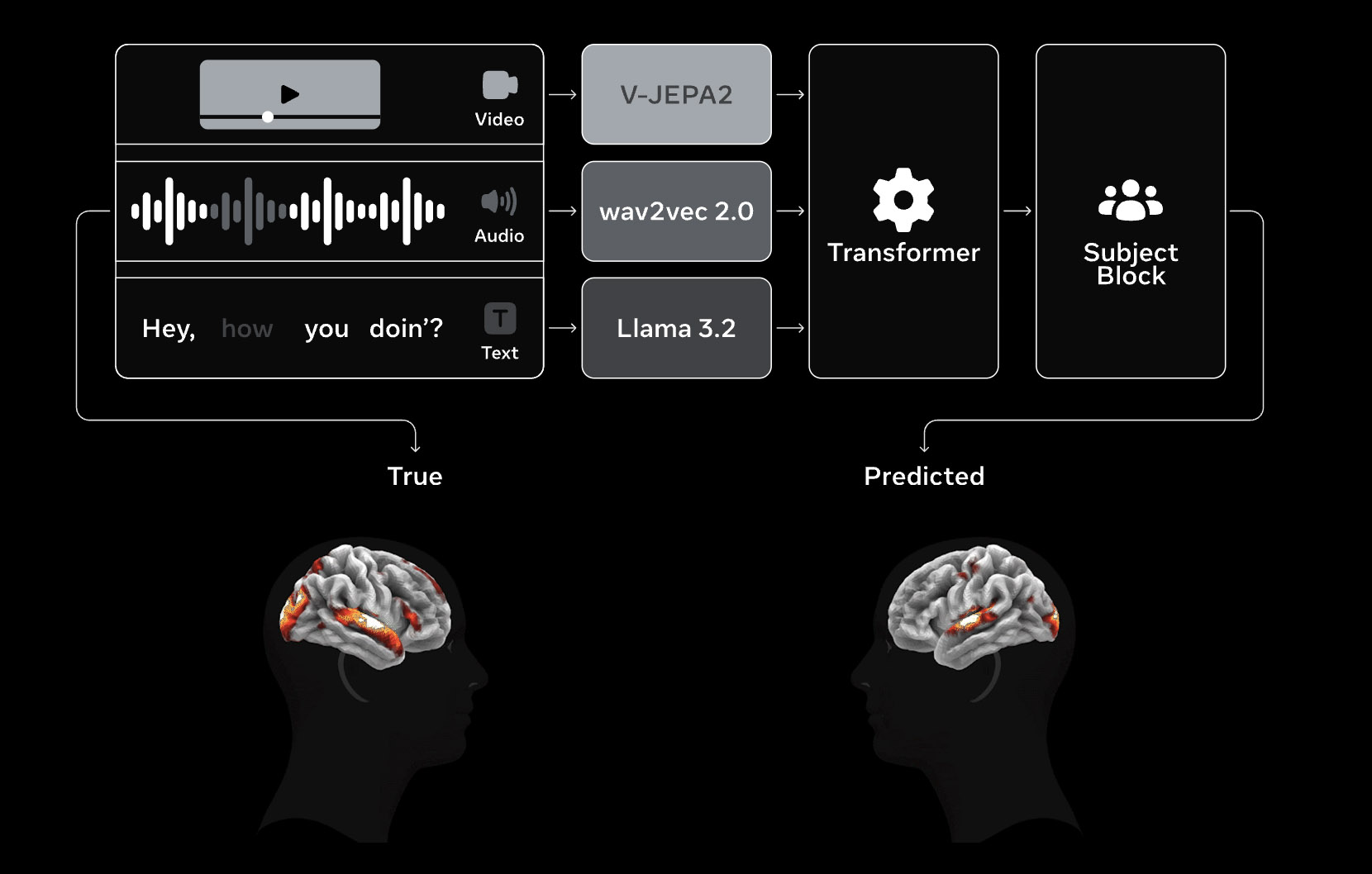

Meta’s Fundamental AI Research group, FAIR, introduced TRIBE v2 on March 26. The tri-modal brain foundation model is designed to predict human fMRI responses to video, audio, and language. It was trained on more than 1,000 hours of fMRI data collected across 720 subjects. According to FAIR, the model outperforms an optimized linear baseline and can reproduce classic neuroscience findings in simulation. Meta released the model as a full research package, including code, model weights, public demos, and a technical paper, positioning it as infrastructure for the neuroscience research community rather than as a one-off release.

TRIBE is not FAIR’s first brain-related release. Meta’s well-funded research group has spent several years publishing work on narrower brain-decoding problems before moving toward broader whole-brain modeling. In 2022, Meta published work on decoding perceived speech from non-invasive brain recordings. In 2023, it published a system for decoding visual representations from MEG. And in 2025, it introduced Brain2Qwerty, a model designed to decode sentence production from EEG and MEG. TRIBE extends that trajectory from more bounded decoding tasks toward a more general model of brain response.

That track record reflects FAIR’s open research posture, as communicated by Meta. The work is framed as research for the broader scientific community, with outputs released in structurally open form. But Meta has also invested in a more explicitly commercial strand of neuroscience. In 2019, the company acquired the New York startup CTRL-labs and folded its work into Reality Labs. Founded in 2015, CTRL-labs was built around a new generation of non-invasive neural interfaces. After four years of operation, the company was acquired in a deal widely reported to fall somewhere between $500 million and $1 billion.

The acquisition came as Meta was starting to look more seriously at new interface paradigms and at how to future-proof its platform strategy. User growth had begun to mature, and the company was investing heavily in AR and VR as ways to make computing more immersive and more persistent throughout daily life. Reality Labs spent the following years developing interface technologies aligned with that vision. That work culminated last September with the launch of the Neural Band. The wrist-worn EMG device measures neuromuscular signals linked to intent and allows users to navigate AR environments through subtle hand gestures, alongside Meta’s Ray-Ban Display glasses.

And so Meta’s neuroscientific ambitions appear to split along two tracks. Open research is housed within its Fundamental AI Research group, FAIR. More explicitly commercial interface work sits within Reality Labs. In public materials, those tracks are presented as separate, with distinct incentives and little visible overlap. So while FAIR may produce increasingly capable brain models, there is, at least publicly, no indication that this work is directly feeding the neural interface programs now emerging from Reality Labs.


Unfortunately, Meta has a poor record when it comes to earning public trust. Over more than a decade of controversy, from the Cambridge Analytica scandal that engulfed the company in 2018 to a record $5 billion FTC privacy settlement and U.N.-linked scrutiny over Facebook’s role in Myanmar, Meta has built a reputation as a company willing to mislead users, test institutional limits, and defend harmful systems for too long.

That history came back into focus on March 25, when a Los Angeles jury found Meta and Google liable for negligence in a youth-harm case centered on addictive platform design. The case argued that Instagram and YouTube were built and operated in ways that harmed a young user’s mental health, and that the companies failed to provide adequate warnings about those risks.

The verdict did not force either company to explain publicly and in full how such engagement systems are engineered. But it pushed a central question closer to the surface: when companies are repeatedly accused of optimizing for compulsion, what should the public make of their growing investment in the science of cognition and behavior?

TRIBE and FAIR are kept at a careful distance from that debate. But Meta has shown before that it is willing to mix cognitive science with commercial incentives. In 2014, the company, then still called Facebook, faced widespread backlash after researchers published the results of an emotional contagion study conducted on nearly 700,000 users. The study treated user affect and behavioral response as variables that could be influenced at scale without informed consent. Set against that history, some of the conceptual distance between TRIBE’s scientific framing and Meta’s commercial record starts to look less comfortable than the company would prefer.

Meta is not the only company operating along a fine ethical line where neurotechnology meets the fiduciary duty to increase shareholder value. Earlier this year, OpenAI announced that it was participating in the seed round of Merge Labs, a new non-invasive brain-computer interface company that has emerged with roughly $252 million in funding. Little is publicly known about the exact product it plans to build or the first use cases it will pursue. But the company’s name is revealing. Merge Labs explicitly refers to the idea of merging human cognition, computers, and AI into a more seamless interface.

The investment fits OpenAI’s own rhetoric. In its announcement, the company said that “progress in interfaces enables progress in computing” and described BCIs as “an important new frontier” that could create a more natural, human-centered way to interact with AI. OpenAI also said it would work with Merge on scientific foundation models and other frontier tools. This is not a vague adjacency play. OpenAI is explicitly backing the development of new neural interfaces as part of its broader vision for how people will use AI systems in the future.

Those interfaces are being framed as intentionally non-invasive. Merge says it is developing new systems that connect with neurons using molecules rather than electrodes and rely on deep-reaching modalities such as ultrasound rather than implanted brain hardware. Ultrasound remains experimental and far from a defined consumer product. But it fits a broader strategic ambition to develop scalable brain interfaces that could eventually sit outside a strictly medical context. Consumers are unlikely to accept intracranial implants anytime soon. A non-invasive route is more compatible with any long-term push toward mass adoption.

The undertone around OpenAI and Merge is different from Meta’s. There is less need to speculate about whether cognitive science might eventually be folded into commercial use. OpenAI’s position is direct. It is building one of the world’s most widely used AI products, with ChatGPT reportedly exceeding 900 million weekly active users, and it is under constant pressure to deepen usage, expand monetization, and make its systems more structurally embedded in daily life. In that context, investment in new interface layers looks like a plausible extension of the product roadmap.

The strongest similarity between Meta and OpenAI may be less about technology than about trust. OpenAI is also under growing scrutiny over the social effects of its products. In recent months, it has faced lawsuits alleging that ChatGPT encouraged suicidal ideation and amplified a user’s delusions before a murder-suicide, while state officials have raised concerns about harms to children and other vulnerable users. Those cases remain allegations, not findings of guilt. But they sharpen the backdrop against which OpenAI is now helping fund one of the largest neurotechnology rounds in recent memory.

Meta and OpenAI are clear examples of how neurotechnology is evolving to a point where it could meaningfully support corporate growth, even when that may not fully align with the interests of consumers. That leaves a series of open questions that become more pressing by the month.

The first is governance. Can a consumer technology company whose primary duty is to shareholders be trusted to develop neurotechnology in ways that remain bounded and responsible, when the same research may also have obvious commercial value in advertising, interface control, engagement optimization, or personalized AI systems?

Second, do research-only boundaries really hold for long inside firms that have a strong incentive, perhaps even a fiduciary duty, to apply those insights across their business models? FAIR presents TRIBE as open neuroscience research, and OpenAI presents Merge as a human-centered interface frontier. Both may be true. But both sit inside companies whose core logic is to make human behavior more legible, predictable, and commercially actionable.

And finally, there is a broader moral question about resource asymmetry. Should these corporations be allowed to shape the frontier of neurotechnology with levels of capital that clinical and public-interest institutions cannot realistically match? Even if Big Tech does not end up shipping the first clinically dominant neurotechnology products, it may still come to control the layer beneath them: the models, compute, data pipelines, software abstractions, and talent markets that determine what the field becomes.

Neurotechnology is now moving closer to public life. In this sensitive window, there is a need for responsible stewards who can show how these tools should be developed and used in ways that genuinely serve society. The questions above are therefore not abstract moral speculation. How seriously they are asked may help determine what role neurotechnology plays in the decades ahead.